Nected is an all-in-one platform that provides building blocks such as a rule engine, workflow automation, and A/B testing, allowing teams to build backend logic flows faster, experiment more, and iterate continuously. Because it is designed for customer-facing and mission-critical workloads, ensuring stability, performance, and scalability under load is a top priority.

This updated post shares fresh insights into Nected’s performance from our latest benchmarking round, in which we tested the system on a PostgreSQL-only setup.

We’ll walk through the benchmark setup, test parameters, results, and key observations that highlight how Nected performs under sustained, high-throughput workloads.

If you want to read the

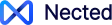

Nected Architecture Overview

Nected's architecture is fundamentally designed to optimize task execution in a no-code/low-code environment. This section breaks down the key components of the platform, offering a clear view of how each contributes to overall performance and scalability.

Core Architecture Overview:

The architecture of Nected is structured to efficiently manage and execute backend logic flows. It comprises several critical services, each playing a unique role in the system:

- Router Service: This service acts as the initial point of contact for all incoming tasks. It routes tasks to the appropriate services for execution, ensuring efficient distribution of workload.

- Task Manager: The Task Manager is crucial for overseeing the lifecycle of each task. It monitors task status, manages task queues, and coordinates between different services to ensure smooth execution.

- Executor Service: Responsible for the actual execution of tasks, the Executor Service processes the backend logic as defined in the tasks. It's designed for high efficiency and low latency in task processing.

This diagram provides a visual representation of the flow of tasks through the system and the interaction between different services.
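To make the Router → Task Manager → Executor flow concrete, here is a minimal in-process sketch. It is not Nected's implementation: the class names, the `kind` field used for routing, and the status values are all hypothetical, and in the real platform these are separate services rather than Python objects. It only shows the division of responsibilities described above: the router picks a destination, the task manager owns queueing and lifecycle status, and the executor runs the logic.

```python
from collections import deque
from dataclasses import dataclass
from enum import Enum
from typing import Any, Callable, Deque, Dict


class Status(Enum):
    QUEUED = "queued"
    RUNNING = "running"
    DONE = "done"
    FAILED = "failed"


@dataclass
class Task:
    task_id: str
    kind: str                  # hypothetical task type, e.g. "rule" or "workflow"
    payload: Dict[str, Any]
    status: Status = Status.QUEUED
    result: Any = None


class Executor:
    """Runs the backend logic registered for each task kind."""

    def __init__(self, handlers: Dict[str, Callable[[Dict[str, Any]], Any]]):
        self.handlers = handlers

    def execute(self, task: Task) -> Any:
        return self.handlers[task.kind](task.payload)


class TaskManager:
    """Queues tasks, tracks their status, and hands them to the executor."""

    def __init__(self, executor: Executor):
        self.executor = executor
        self.queue: Deque[Task] = deque()
        self.tasks: Dict[str, Task] = {}

    def submit(self, task: Task) -> None:
        self.tasks[task.task_id] = task
        self.queue.append(task)

    def run_pending(self) -> None:
        while self.queue:
            task = self.queue.popleft()
            task.status = Status.RUNNING
            try:
                task.result = self.executor.execute(task)
                task.status = Status.DONE
            except Exception:
                task.status = Status.FAILED


class Router:
    """Entry point: forwards each incoming task to the manager for its kind."""

    def __init__(self, managers: Dict[str, TaskManager]):
        self.managers = managers

    def route(self, task: Task) -> None:
        self.managers[task.kind].submit(task)


# Usage: one rule handler, one manager, one router.
executor = Executor({"rule": lambda p: p["amount"] > 100})
manager = TaskManager(executor)
router = Router({"rule": manager})
router.route(Task("t1", "rule", {"amount": 250}))
router.route(Task("t2", "rule", {}))  # missing "amount": handler raises KeyError
manager.run_pending()
print(manager.tasks["t1"].status, manager.tasks["t1"].result)  # Status.DONE True
print(manager.tasks["t2"].status)                              # Status.FAILED
```

Keeping lifecycle tracking in the task manager means a failing handler only marks its own task as failed; the router and executor stay stateless.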

Scalability and Performance:

Each component in Nected’s architecture is designed with scalability in mind. The platform can dynamically adjust resource allocation based on the incoming workload. This flexibility is key to maintaining performance under varying load conditions.

- Scalable Router Service: It can scale up to handle high volumes of incoming requests, ensuring that task routing does not become a bottleneck.

- Efficient Task Management: The Task Manager's ability to efficiently queue and monitor tasks plays a vital role in maintaining system performance even as the number of tasks increases.

- High-Performance Executor Service: Optimized for rapid task execution, this service ensures that the processing time remains minimal, a critical factor in overall system responsiveness.
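The post does not say how resource allocation is adjusted. As one common way to do this on Kubernetes, the sketch below implements the proportional rule documented for the Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric), skipped when the ratio is within a tolerance. Whether Nected uses an HPA, and the `min_replicas`, `max_replicas`, and `tolerance` defaults shown, are assumptions for illustration only.

```python
import math


def desired_replicas(current_replicas: int, current_util: float, target_util: float,
                     min_replicas: int = 1, max_replicas: int = 20,
                     tolerance: float = 0.1) -> int:
    """HPA-style rule: scale replicas in proportion to observed vs. target utilization.

    current_util and target_util use the same unit (e.g. % CPU per pod).
    min_replicas, max_replicas, and tolerance are illustrative defaults.
    """
    ratio = current_util / target_util
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: avoid flapping
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


print(desired_replicas(4, 90, 60))    # 6: overloaded, scale out
print(desired_replicas(4, 62, 60))    # 4: within tolerance, no change
print(desired_replicas(4, 20, 60))    # 2: underused, scale in
print(desired_replicas(10, 300, 60))  # 20: capped at max_replicas
```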

In summary, Nected’s architecture is a balanced ecosystem of services, each designed to contribute to the platform's overall efficiency and scalability. This structure is pivotal in ensuring that Nected can handle increasing loads without compromising on performance.

Benchmark Setup

For this benchmark, we designed a PostgreSQL-only performance test to measure Nected’s throughput, latency, and overall system behavior under controlled, high-load conditions.

This setup helps us isolate and assess the system’s raw execution efficiency when using PostgreSQL as the primary data store.

Infrastructure Configuration

We deployed the services on a Kubernetes cluster with 40 vCPUs and paired it with AWS RDS PostgreSQL (m7g.8xlarge) and AWS ElastiCache Redis (cache.m7g.medium) to simulate a realistic, production-grade environment.

Here’s the infrastructure setup:

Service Resource Allocation

Each Nected service was assigned specific CPU and pod configurations to reflect real operational conditions.

The table below shows the resource distribution across services in the cluster:

High-Throughput Configuration Parameters

To maximize concurrency and simulate a high-performance environment, the system was tuned with aggressive throughput parameters.

These configurations ensured the Temporal workers and executors could handle thousands of concurrent tasks.

Load Testing Configuration (JMeter)

The load test was executed using Apache JMeter to simulate distributed client requests from multiple nodes.

Here’s the testing setup that helped us generate a sustained, high-concurrency workload:

Performance Results and Observations

Overall System Performance

The Nected service showed excellent stability and high throughput throughout the benchmarking window.

Over a 3-minute test run, the system processed 144,000 requests with a 0% failure rate, demonstrating strong reliability.

- Total requests processed: 144,000

- Error rate: 0.00%

- APDEX score: 0.905 (Excellent)

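APDEX (Application Performance Index) scores each request against response-time thresholds: satisfied requests count fully, tolerating requests count half, and frustrated requests count zero, giving a value between 0 and 1. Because a score of 0.905 can only be reached if satisfied requests make up at least 81% of the total, it indicates that the large majority of requests completed within the satisfied threshold.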
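The sketch below shows how the headline numbers (total requests, error rate, throughput, and APDEX) can be derived from a JMeter CSV results file, assuming it contains the standard `timeStamp`, `elapsed`, and `success` columns. The 500 ms and 1500 ms thresholds are assumptions (the benchmark's actual thresholds are not stated), counting failed samples as frustrated is a modelling choice, and `summarize_jtl` is a hypothetical helper, not part of JMeter.

```python
import csv
import io
from dataclasses import dataclass


@dataclass
class Summary:
    total: int
    error_rate: float      # fraction of samples with success != "true"
    throughput_rps: float  # samples per second of wall-clock test time
    apdex: float


def summarize_jtl(jtl_csv: str, satisfied_ms: int = 500, tolerated_ms: int = 1500) -> Summary:
    """Summarize JMeter CSV results (needs timeStamp, elapsed and success columns)."""
    rows = list(csv.DictReader(io.StringIO(jtl_csv)))
    if not rows:
        raise ValueError("no samples")
    satisfied = tolerating = failures = 0
    for row in rows:
        elapsed = int(row["elapsed"])
        if row["success"] != "true":
            failures += 1               # failed samples count as frustrated
        elif elapsed <= satisfied_ms:
            satisfied += 1
        elif elapsed <= tolerated_ms:
            tolerating += 1             # slower than ideal, but tolerable
        # anything slower is frustrated and adds nothing to the score
    start = min(int(r["timeStamp"]) for r in rows)
    end = max(int(r["timeStamp"]) + int(r["elapsed"]) for r in rows)
    total = len(rows)
    return Summary(
        total=total,
        error_rate=failures / total,
        throughput_rps=total / ((end - start) / 1000),
        apdex=(satisfied + tolerating / 2) / total,
    )


SAMPLE = """timeStamp,elapsed,label,success
1000,200,rule,true
1100,400,rule,true
1500,900,rule,true
2000,2000,rule,true
"""
print(summarize_jtl(SAMPLE))
# Summary(total=4, error_rate=0.0, throughput_rps=1.3333333333333333, apdex=0.625)
```

In the toy sample, two requests are satisfied, one is tolerating, and one is frustrated, so APDEX = (2 + 1/2) / 4 = 0.625 even though the error rate is zero.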

Latency and Response Time

Latency directly affects perceived performance, especially in customer-facing workflows.

Here’s how Nected performed across different percentiles:

Even at the 95th and 99th percentiles, response times remained within acceptable thresholds for backend automation workloads. The few isolated spikes most likely came from garbage-collection cycles or connection-pool overhead rather than a sustained issue.
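To show why isolated spikes surface only in the tail percentiles, here is a nearest-rank percentile sketch. JMeter's report may use a different percentile algorithm, and the latency values below are made up for illustration; they are not benchmark data.

```python
import math
from typing import Sequence


def percentile(samples_ms: Sequence[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of samples <= it."""
    if not samples_ms:
        raise ValueError("no samples")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples_ms)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# 98 fast responses plus two isolated slow ones (e.g. a GC pause).
latencies = [100] * 98 + [900, 2500]
for p in (50, 95, 99, 100):
    print(f"p{p}: {percentile(latencies, p)} ms")
# p50: 100 ms, p95: 100 ms, p99: 900 ms, p100: 2500 ms
```

Two slow samples out of 100 leave the median and p95 untouched but dominate p99 and the maximum, which is why a few spikes do not imply a sustained slowdown.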

Throughput and Scalability

Throughput represents how many requests the system can handle per second.

Nected sustained a steady throughput of ~887 requests per second (RPS), measured across all six JMeter load-generator nodes.

Key network performance insights:

- Throughput: ~887 RPS sustained

- Network utilization: 710 KB/sec received, 210 KB/sec sent

- Payload distribution: Balanced and efficient, no network bottlenecks detected

- Infrastructure: 40-core Kubernetes cluster + m7g.8xlarge PostgreSQL + Redis cache.m7g.medium comfortably supported load
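The reported figures can be cross-checked with simple arithmetic. The sketch below applies Little's law (L = λW) to the ~887 RPS throughput and the 378 ms average response time quoted in the conclusion, and divides the network rates by the request rate to get an average payload per request. It assumes a steady state and that "received" is measured from JMeter's side (i.e. response bodies); the results are estimates, not measured values.

```python
def in_flight_requests(throughput_rps: float, mean_latency_s: float) -> float:
    """Little's law, L = lambda * W: average number of requests in the system."""
    return throughput_rps * mean_latency_s


def kb_per_request(kb_per_sec: float, throughput_rps: float) -> float:
    """Average payload size implied by a byte rate and a request rate."""
    return kb_per_sec / throughput_rps


RPS = 887               # sustained throughput reported above
MEAN_LATENCY_S = 0.378  # 378 ms average response time from the conclusion

print(f"requests in flight: ~{in_flight_requests(RPS, MEAN_LATENCY_S):.0f}")  # ~335
print(f"received per request: {kb_per_request(710, RPS):.2f} KB")            # 0.80 KB
print(f"sent per request: {kb_per_request(210, RPS):.2f} KB")                # 0.24 KB
```

Roughly 335 concurrent in-flight requests with sub-kilobyte payloads is consistent with the observation that the network was not a bottleneck.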

System Stability

Stability was a standout highlight of this benchmark.

No request failures, crashes, or resource bottlenecks were recorded during the 3-minute high-load window.

Additional observations:

- Temporal worker scaling handled concurrency efficiently.

- Executor configurations (pollers, concurrent execution, and RPS limits) ensured smooth task distribution.

- Balanced pod utilization across Executor, History, and Frontend services confirmed healthy orchestration.
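The executor settings mentioned above (concurrent execution and RPS limits) are two different throttles. The asyncio sketch below illustrates both generically: a token bucket caps how many tasks start per second, and a semaphore caps how many run at once. This is not Temporal's or Nected's implementation; pollers are not modelled, and `TokenBucket`, `run_tasks`, and all numeric values are hypothetical.

```python
import asyncio
import time


class TokenBucket:
    """Permits about `rate` starts per second, with bursts of up to `capacity`."""

    def __init__(self, rate: float, capacity: int):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.updated = time.monotonic()
        self.lock = asyncio.Lock()

    async def acquire(self) -> None:
        async with self.lock:
            while True:
                now = time.monotonic()
                # Refill in proportion to elapsed time, never above capacity.
                self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
                self.updated = now
                if self.tokens >= 1:
                    self.tokens -= 1
                    return
                # Sleep roughly until the next token is available.
                await asyncio.sleep((1 - self.tokens) / self.rate)


async def run_tasks(n_tasks: int, max_concurrent: int, rps_limit: float, work_s: float):
    """Run n_tasks dummy tasks under both an RPS limit and a concurrency cap."""
    bucket = TokenBucket(rate=rps_limit, capacity=max_concurrent)
    slots = asyncio.Semaphore(max_concurrent)
    active = peak = 0

    async def execute(i: int) -> int:
        nonlocal active, peak
        await bucket.acquire()           # RPS limit: wait for a token
        async with slots:                # concurrency limit: bounded parallelism
            active += 1
            peak = max(peak, active)
            await asyncio.sleep(work_s)  # stand-in for real task execution
            active -= 1
        return i

    results = await asyncio.gather(*(execute(i) for i in range(n_tasks)))
    return results, peak


start = time.monotonic()
results, peak = asyncio.run(run_tasks(n_tasks=20, max_concurrent=5, rps_limit=100.0, work_s=0.01))
elapsed = time.monotonic() - start
print(len(results), peak, f"{elapsed:.2f}s")
```

With a burst of 5 and 100 tokens per second, the last of the 20 tasks cannot start until about 0.15 s in, while the semaphore guarantees no more than 5 run concurrently; tuning the two limits independently is what lets a worker absorb bursts without overwhelming downstream services.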

Conclusion

The PostgreSQL benchmark reaffirms Nected’s core strengths in stability, scalability, and performance.

With a 40-core Kubernetes cluster and optimized Temporal configurations, the platform smoothly handled nearly 900 requests per second without a single error.

The key takeaways are:

- Stability: Zero failures under sustained load

- Performance: 378 ms average response time, APDEX 0.905

- Scalability: Balanced pod utilization and no resource saturation

This benchmark demonstrates that Nected can reliably power large-scale backend logic automation with consistency, low latency, and high throughput, even under demanding, production-like loads.

It’s another validation of our ongoing commitment to performance excellence as we continue scaling Nected for enterprise-grade workloads.
