We’ve all built that one service. The one that started as a clean, simple API but slowly mutated into a massive, tangled web of if/else statements.

Usually, it's the checkout service, the pricing module, or a risk assessment service. First, the business asks for simple discount logic. Then, they want discounts based on user tiers. Next week, it’s user tiers plus geographic location, but only if the cart value is over $50 and it's a Tuesday.

Before you know it, your microservice is just a glorified dumping ground for business rules. Every time a product manager wants to tweak a discount, you have to run a full build, run integration tests, and deploy a core microservice. This is exactly the kind of tight coupling that microservices were supposed to prevent.

The fix for this architectural headache is extracting that volatile logic into a separate layer. This brings us to the concept of integrating a decision engine in microservices.

Let’s look at how to actually architect this without turning your infrastructure into a distributed monolith.

What Are We Actually Talking About?

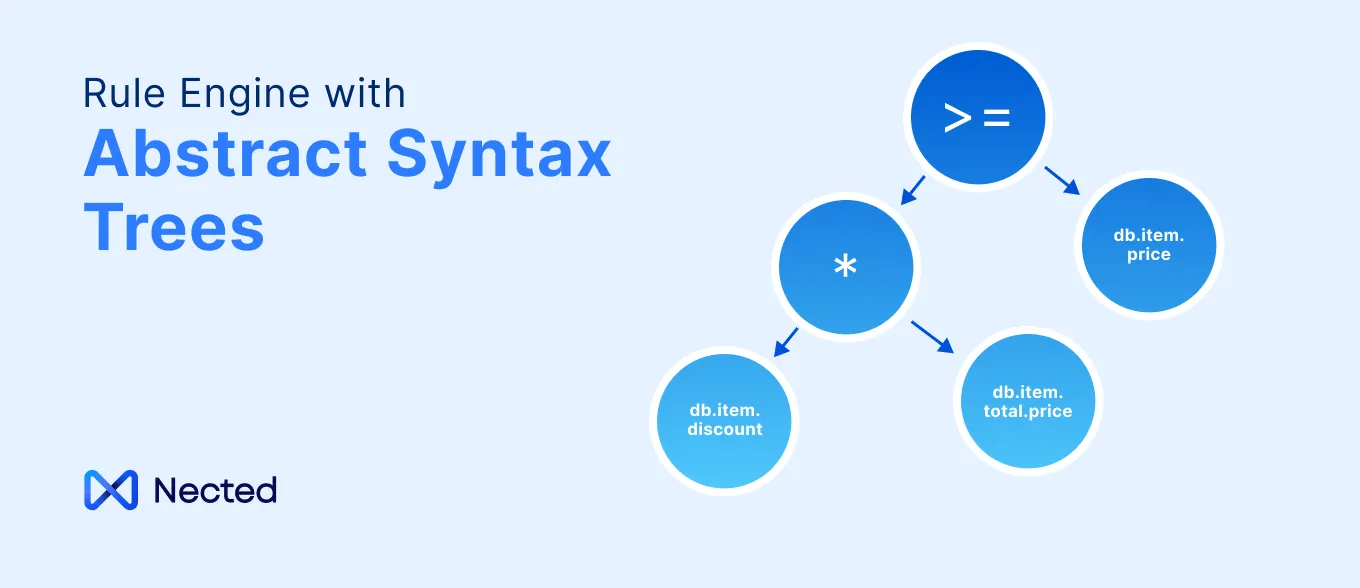

A decision engine (or business rules engine) is a piece of software that executes rules separate from your core application code. You feed it a context (data), it evaluates that context against a predefined set of rules, and it returns a decision.
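To make the "context in, decision out" shape concrete, here is a minimal sketch in Python. The `Rule` class, the two rules, and the field names (`tier`, `cart_total`, `discount_pct`) are all made up for illustration, and the first-match-wins strategy is just one possible evaluation policy. Real engines add rule priorities, decision tables, and much more.

```python
from dataclasses import dataclass
from typing import Any, Callable

Context = dict[str, Any]


@dataclass(frozen=True)
class Rule:
    name: str
    condition: Callable[[Context], bool]  # predicate over the input context
    outcome: dict[str, Any]               # decision returned when the rule matches


# Hypothetical rules, evaluated in order; the first match wins.
RULES = [
    Rule(
        "premium_big_cart",
        lambda ctx: ctx["tier"] == "premium" and ctx["cart_total"] > 50,
        {"discount_pct": 10},
    ),
    Rule(
        "any_big_cart",
        lambda ctx: ctx["cart_total"] > 50,
        {"discount_pct": 5},
    ),
]

DEFAULT = {"discount_pct": 0}


def decide(ctx: Context, rules: list[Rule] = RULES) -> dict[str, Any]:
    """Return the outcome of the first matching rule, plus the rule that fired."""
    for rule in rules:
        if rule.condition(ctx):
            return {**rule.outcome, "rule": rule.name}
    return {**DEFAULT, "rule": None}
```

The application code only ever calls `decide(ctx)`. Which rules exist, and in what order, is data that can change without touching the caller.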

In a monolithic architecture, you'd usually just import a library like Drools directly into your massive codebase. But in a distributed system, integrating a decision engine requires a bit more architectural thought. You have to figure out where the engine lives, how it gets the data it needs to make decisions, and how you manage network latency.

The "Why": Pain Points You're Trying to Solve

If you're wondering whether you actually need to introduce this complexity, look for these smells in your current setup:

- Deployment bottlenecks: You are deploying heavy, stateful services just to change a static threshold variable (like changing a fraud score limit from 80 to 85).

- Scattered logic: Service A calculates a discount one way, Service B uses a slightly different, outdated logic.

- Black-box business logic: Product managers have to ask engineers to read the code to tell them what the current active business rules are.

By moving rules into a decision engine, you separate the "what" (the business rules) from the "how" (the execution and plumbing).

Architecture Patterns for Integration

You can't just drop a rules engine into a Kubernetes cluster and hope for the best. How your services communicate with the engine dictates your system's performance and reliability. Here are the three main ways engineers wire this up.

Pattern 1: The Centralized Decision Service (Synchronous)

This is the most common starting point. You wrap your decision engine (like Camunda DMN, Drools, or a custom evaluator) in a lightweight web server and deploy it as its own independent microservice.

When your OrderService needs to calculate a discount, it makes an HTTP REST or gRPC call to the DecisionService, passing the cart details as the payload.

The Pros:

- Single source of truth. All rules live in one place.

- You can update rules on the fly without touching the calling services.

- Polyglot friendly. Your Node.js, Go, and Java services can all call the same HTTP endpoint.

The Cons:

- The network hop. If you have strict latency budgets (e.g., ad bidding or high-frequency trading), adding a 20ms network hop for every decision is a non-starter.

- It’s a massive single point of failure. If the Decision Service goes down, everything that depends on it stops working.
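One common way to soften both cons is to call the engine with a tight timeout and fall back to a safe default when it doesn't answer. The sketch below assumes a hypothetical endpoint URL and a hypothetical response shape (`discount_pct`); the transport is injectable so the fallback path can be exercised without a network.

```python
import json
import urllib.request
from typing import Any, Callable

# Hypothetical endpoint; substitute whatever your DecisionService exposes.
DECISION_URL = "http://decision-service.internal/v1/decisions/discount"

# Conservative answer used when the engine cannot be reached in time.
FALLBACK = {"discount_pct": 0, "source": "fallback"}

Transport = Callable[[str, dict[str, Any], float], dict[str, Any]]


def http_post(url: str, payload: dict[str, Any], timeout: float) -> dict[str, Any]:
    """POST a JSON body and parse the JSON response (standard library only)."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)


def get_discount(
    cart: dict[str, Any],
    post: Transport = http_post,
    timeout: float = 0.05,
) -> dict[str, Any]:
    """Ask the decision service for a discount; degrade to FALLBACK on failure."""
    try:
        return post(DECISION_URL, cart, timeout)
    except (OSError, ValueError):
        # URLError and socket timeouts are OSError subclasses;
        # a malformed JSON body raises a ValueError subclass.
        return dict(FALLBACK)
```

Whether "no discount" is an acceptable fallback is a business call. For a risk check, the safe default might be "send to manual review" instead.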

Pattern 2: The Sidecar or Embedded Engine

If the network hop of a centralized service is killing your latency, you move the engine closer to the compute.

In the sidecar model, you deploy the decision engine as a separate container within the same Kubernetes Pod as your microservice. They communicate over localhost, cutting network latency down to almost zero.

Alternatively, if your services share a tech stack (e.g., everything is in Go), you can compile the rule evaluator directly into the microservices as a shared library, while keeping the rules themselves centralized in a database or object store. The library periodically polls for rule updates and caches them in memory. Open Policy Agent (OPA) is heavily used in this exact configuration, though it's usually for authorization rather than business logic.

The Pros:

- Extremely fast. In-memory or localhost evaluations take microseconds.

- Better fault tolerance. If the central rule repository goes down, the local service can just use the last cached version of the rules.

The Cons:

- Harder to guarantee absolute consistency. Service A might fetch the updated rules at 10:00 AM, while Service B doesn't poll again until 10:05 AM. During those 5 minutes, your system is in a split-brain state regarding business logic.
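The caching behaviour described above can be sketched as follows. `CachedRules` and its `loader` callback are hypothetical names; the loader stands in for whatever fetches the ruleset from your database or object store. To keep it short, this version refreshes lazily on read instead of running a background poller, which is a simplification of the polling described earlier.

```python
import time
from typing import Any, Callable, Optional


class CachedRules:
    """Keep rules in memory and reload them at most once per interval."""

    def __init__(
        self,
        loader: Callable[[], dict[str, Any]],
        interval_s: float = 60.0,
        clock: Callable[[], float] = time.monotonic,
    ):
        self._loader = loader          # hypothetical: fetches the current ruleset
        self._interval_s = interval_s  # how stale the local copy is allowed to get
        self._clock = clock            # injectable so tests can control time
        self._rules: Optional[dict[str, Any]] = None
        self._next_refresh = 0.0

    def get(self) -> dict[str, Any]:
        now = self._clock()
        if self._rules is None or now >= self._next_refresh:
            try:
                self._rules = self._loader()
            except Exception:
                # Repository unreachable: keep serving the last good copy.
                # With no copy at all there is nothing safe to serve, so re-raise.
                if self._rules is None:
                    raise
            self._next_refresh = now + self._interval_s
        return self._rules
```

The `interval_s` value is exactly the consistency window from the con above: a shorter interval narrows the split-brain period at the cost of more load on the rule repository.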

Pattern 3: Event-Driven Decisions (Asynchronous)

Not all decisions need to happen right this second. Think about fraud detection, user segmentation, or sending a "cart abandoned" email.

Instead of a synchronous API call, your core microservices just emit state changes to a Kafka topic or RabbitMQ exchange. The Decision Engine acts as a consumer. It reads the event, runs the rules, and if an action is required, it emits a new event.

The Pros:

- Completely unblocks the user request thread.

- Highly resilient. If the engine crashes, the events just sit in the queue waiting until it recovers.

The Cons:

- Useless for blocking decisions. If you need to know right now if a user has sufficient balance or permissions to proceed, async won't work.
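The consume, evaluate, emit loop looks roughly like this. The sketch uses the standard library's in-memory `queue.Queue` as a stand-in for a Kafka topic or RabbitMQ exchange, and the event fields and the fraud rule are invented for illustration. A real consumer would also need offset commits or acknowledgements, retries, and idempotent handling.

```python
import queue
from typing import Any, Optional

Event = dict[str, Any]


def evaluate(event: Event) -> Optional[Event]:
    """Hypothetical rule: flag large orders from accounts younger than 7 days."""
    if (
        event["type"] == "order_placed"
        and event["amount"] > 1000
        and event["account_age_days"] < 7
    ):
        return {"type": "order_flagged_for_review", "order_id": event["order_id"]}
    return None  # no action required


def drain(inbound: "queue.Queue[Event]", outbound: "queue.Queue[Event]") -> int:
    """Consume every pending event and emit a follow-up event when a rule fires."""
    handled = 0
    while True:
        try:
            event = inbound.get_nowait()
        except queue.Empty:
            return handled
        decision = evaluate(event)
        if decision is not None:
            outbound.put(decision)
        handled += 1
```

Note that the producer never waits on `evaluate`. It publishes the event and moves on, which is what unblocks the request thread.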

The Data Hydration Problem (The Hard Part)

Here is where most implementations fall apart.

To make a decision, the engine needs data. Let's say the rule is: "If the user has been a premium member for over a year and has zero support tickets in the last 30 days, give them a 10% discount."

Where does the decision engine get the "member status" and "support ticket count"?

You have two choices:

1. The Caller Gathers the Data (Fat Payloads)

The OrderService queries the UserService and the SupportService, bundles all that data into a massive JSON payload, and sends it to the Decision Engine.

- Why it's good: The Decision Engine remains stateless and fast. It just evaluates whatever you give it.

- Why it sucks: The calling service has to know exactly what data the engine needs. If business adds a rule tomorrow checking the user's location, you have to update the OrderService to start fetching location data. This completely defeats the purpose of decoupling.

2. The Engine Fetches the Data (Smart Engine)

The caller just passes a userId and cartId. The Decision Engine reaches out to the UserService and SupportService itself to grab what it needs to evaluate the active rules.

- Why it's good: The caller stays dumb. You can change rules all day without updating the calling services.

- Why it sucks: Your decision engine just became an orchestrator and a distributed monolith. It now has dependencies on half your infrastructure. If the SupportService is slow, the Decision Engine is slow.

The pragmatic middle ground: Use the "Fat Payload" for data immediately available in the current transaction context, and allow the Decision Engine to make lightweight read-only queries for missing context, heavily backed by a caching layer (like Redis).
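Here is one way that middle ground could look. `ContextResolver`, the `fetchers` mapping, and the field names are hypothetical, and the plain dict cache is only a stand-in for Redis: it has no TTL or eviction, which a real deployment would need.

```python
from typing import Any, Callable


class ContextResolver:
    """Resolve rule inputs from the caller's payload first, then from cached lookups."""

    def __init__(self, fetchers: dict[str, Callable[[str], Any]]):
        # Field name -> read-only lookup keyed by user id (hypothetical service calls).
        self._fetchers = fetchers
        # Stand-in for Redis; no TTL or eviction in this sketch.
        self._cache: dict[tuple[str, str], Any] = {}

    def resolve(self, payload: dict[str, Any], needed: list[str]) -> dict[str, Any]:
        ctx = dict(payload)
        user_id = payload["user_id"]
        for field in needed:
            if field in ctx:
                continue  # the caller already supplied it
            key = (user_id, field)
            if key not in self._cache:
                self._cache[key] = self._fetchers[field](user_id)
            ctx[field] = self._cache[key]
        return ctx
```

The `needed` list would come from the active ruleset, so adding a rule that reads a new field means registering one fetcher in the engine instead of changing every caller.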

Build vs. Buy: Tooling Choices

When engineers first look at this problem, the instinct is often to write a custom JSON-based rule parser. Don't do it. Maintaining a custom syntax tree parser, UI, and versioning system is a massive time sink.

To put the true cost of building in-house into perspective, here is a 5-year comparison of total ownership costs and implementation timelines:

Rather than taking on that technical debt, evaluate the standard off-the-shelf tooling choices.

Read more from here: Build vs Buy

Testing and Versioning Rules

Treat your rules like code. This means they need to live in source control.

A common anti-pattern is giving product managers access to a production UI where they can change rules on the fly. This is terrifying. A typo in a rule can zero out prices across your application.

Implement GitOps for your decisions. Store rules as YAML, JSON, or DMN files in a Git repository. When a change is pushed, trigger a CI pipeline. The pipeline should run automated tests against the rules—passing mock JSON payloads and asserting the expected output—before the updated rules get pulled by the decision engine.
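A pipeline step like that can be very small. In the sketch below, the test cases are inlined as a JSON string for brevity (in practice they would be a file in the rules repository), and `evaluate` is only a stand-in for a call into your real engine with the candidate ruleset loaded. All names and fields are illustrative.

```python
import json
from typing import Any, Callable

# In a real pipeline this would be read from a file in the rules repository.
CASES_JSON = """
[
  {"name": "premium over threshold",
   "input": {"tier": "premium", "cart_total": 80},
   "expect": {"discount_pct": 10}},
  {"name": "below threshold",
   "input": {"tier": "premium", "cart_total": 20},
   "expect": {"discount_pct": 0}}
]
"""


def evaluate(ctx: dict[str, Any]) -> dict[str, Any]:
    """Stand-in for the real engine evaluating the candidate ruleset."""
    if ctx["tier"] == "premium" and ctx["cart_total"] > 50:
        return {"discount_pct": 10}
    return {"discount_pct": 0}


def run_cases(
    cases_json: str,
    evaluate_fn: Callable[[dict[str, Any]], dict[str, Any]],
) -> list[str]:
    """Run every case and return failure messages; an empty list means pass."""
    failures = []
    for case in json.loads(cases_json):
        actual = evaluate_fn(case["input"])
        if actual != case["expect"]:
            failures.append(
                f"{case['name']}: expected {case['expect']}, got {actual}"
            )
    return failures
```

The CI job would print the messages from `run_cases` and exit non-zero if the list is not empty, which blocks the merge.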

Also, always version your rules. When the calling service requests a decision, the engine's response should include the version of the ruleset that was used. This is crucial for debugging. When a customer complains about an odd charge, you need to know exactly which logic was active at that specific millisecond.
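As a sketch of what that response could look like, the example below derives a version id from the ruleset content and attaches it to every decision. Hashing the content is an assumption made for illustration; a Git commit SHA or a release tag works just as well. The ruleset fields are made up.

```python
import hashlib
import json
from typing import Any


def ruleset_version(ruleset: dict[str, Any]) -> str:
    """Derive a stable id from the ruleset content (key order does not matter)."""
    canonical = json.dumps(ruleset, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def decide(ruleset: dict[str, Any], ctx: dict[str, Any]) -> dict[str, Any]:
    """Evaluate a toy threshold rule and report which ruleset produced the answer."""
    qualifies = ctx["cart_total"] > ruleset["min_cart_total"]
    return {
        "discount_pct": ruleset["discount_pct"] if qualifies else 0,
        "ruleset_version": ruleset_version(ruleset),
    }
```

If the calling service logs `ruleset_version` next to the order, you can later check out that exact ruleset and replay the decision.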

The Bottom Line

Pulling business logic out of your microservices isn't a silver bullet. You are trading code complexity in your services for operational complexity in your architecture.

But at a certain scale, having a dedicated engine is the only way to keep your codebase sane. Start small. Pick one highly volatile domain, like pricing, risk, or compliance, and abstract it behind a simple interface. Figure out your data hydration strategy early, keep your network hops in check, and whatever you do, keep your rules in source control.

FAQs

1. When should I completely avoid using a decision engine?

Avoid one if your rules only change once a year, or if they rely heavily on the internal, transient state of a single service. Don't add an entire architectural layer and network latency just because a blog post told you to decouple things. Keep it simple until the pain of deployment forces your hand.

2. Is a decision engine the same thing as an API Gateway?

Not at all. Gateways handle cross-cutting infrastructure concerns at the edge—routing, rate limiting, and auth. Decision engines sit deeper in your stack and evaluate pure, domain-specific business logic, like whether a specific user profile qualifies for a specific insurance premium.

3. How do we handle testing if the rules are decoupled from the code?

You treat the rules exactly like code. Store them in a Git repo and run a CI pipeline that throws mock JSON payloads at the rule evaluator. If the output doesn't match your assertions, the build fails and the rules don't get merged or deployed.

4. What's the real performance hit of a centralized rules service?

You are looking at a standard network hop, so expect an extra 10-30ms per HTTP/gRPC call. If you're building a real-time bidding system, that's fatal. If you're calculating a shopping cart total, no one will notice. Use the sidecar pattern if latency is a critical constraint.

5. Should we let Product Managers edit the rules directly in production?

Absolutely not. Giving non-engineers a UI to blindly push logic changes to production is a fast track to an outage. They can draft the rules in a friendly UI, but that UI must commit to a Git repo to trigger your automated testing pipeline before anything goes live.
