There's a moment in most growing businesses when the volume of decisions just outpaces the people making them. Applications pile up. Approvals slow down. Exceptions get missed. The process that worked fine at one scale completely falls apart at the next.

An AI decision engine is one of the more practical answers to that problem. Not the flashiest technology, but one of the more useful ones.

What Is an AI Decision Engine?

At its core, it's a system that makes decisions automatically — using data, rules, and machine learning models — instead of routing everything to a human reviewer.

You feed it inputs. A customer profile. A transaction. A support request. The engine evaluates that data against whatever logic it's been given — predefined rules, trained models, or a mix of both — and returns a decision. Approve. Decline. Escalate. Route to team B. Offer this discount tier instead.

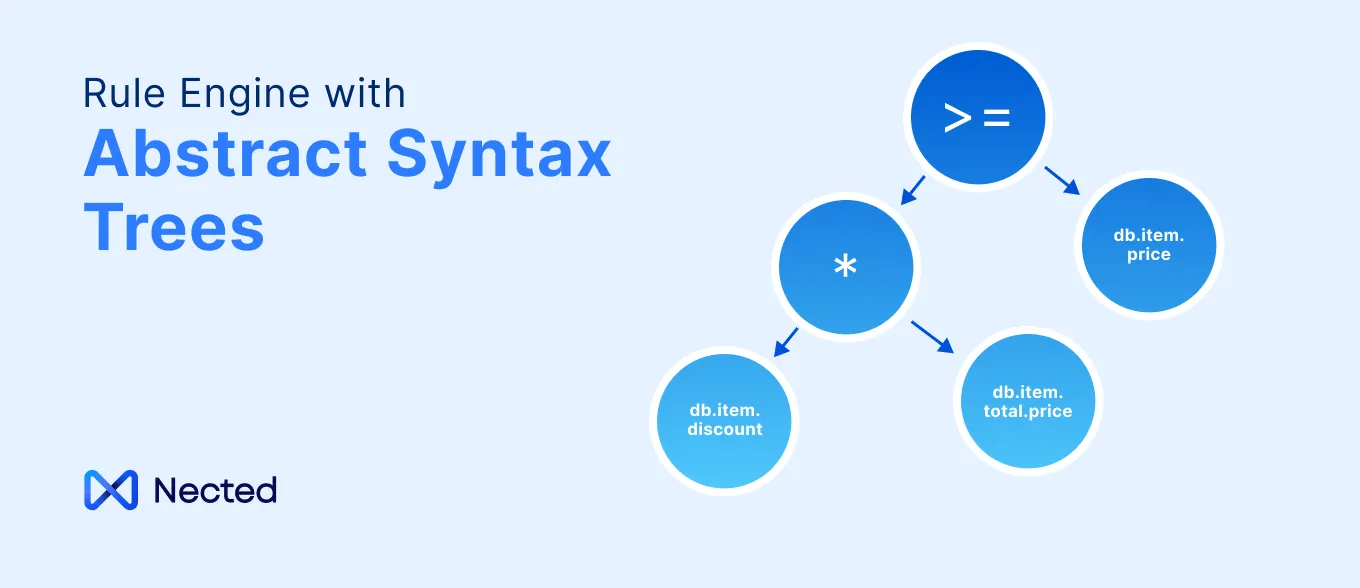

The "AI" part is what separates it from older rule-based systems. Traditional decision engines ran purely on if-then logic. That works fine when the world is predictable. When it isn't — when fraud patterns shift, when customer behavior changes, when edge cases multiply — static rules start to break down. AI decision engines can adapt. They learn from new data. They catch patterns humans wouldn't think to write a rule for.

That's the meaningful difference.
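To make that difference concrete, here is a minimal sketch assuming a fraud scorer built as an online logistic regression. That is one of many possible model types, not something this article prescribes, and the two features, the learning rate, and `static_rule` are hypothetical. A fixed if-then rule written for an old fraud pattern misses a new one, while the model shifts its weights as labeled examples of the new pattern arrive.

```python
import math
from dataclasses import dataclass, field


def static_rule(x: list[float]) -> bool:
    """Traditional if-then logic, written for the old fraud pattern only."""
    return x[0] == 1.0  # hypothetical feature 0: "new device" flag


@dataclass
class OnlineLogisticModel:
    """Hypothetical fraud scorer that keeps learning from labeled outcomes."""
    n_features: int
    learning_rate: float = 0.5
    weights: list[float] = field(default_factory=list)
    bias: float = 0.0

    def __post_init__(self) -> None:
        if not self.weights:
            self.weights = [0.0] * self.n_features

    def predict_proba(self, x: list[float]) -> float:
        """Probability that the input is fraudulent (sigmoid of a linear score)."""
        z = self.bias + sum(w * xi for w, xi in zip(self.weights, x))
        return 1.0 / (1.0 + math.exp(-z))

    def update(self, x: list[float], label: int) -> None:
        """One stochastic gradient step on log-loss; the gradient is (p - y) * x."""
        error = self.predict_proba(x) - label
        self.weights = [w - self.learning_rate * error * xi
                        for w, xi in zip(self.weights, x)]
        self.bias -= self.learning_rate * error


model = OnlineLogisticModel(n_features=2)

# Old pattern: fraud shows up on feature 0.
for _ in range(200):
    model.update([1.0, 0.0], 1)
    model.update([0.0, 0.0], 0)

# The pattern shifts: fraud now shows up on feature 1 (hypothetical "foreign IP" flag).
for _ in range(200):
    model.update([0.0, 1.0], 1)
    model.update([0.0, 0.0], 0)

new_fraud = [0.0, 1.0]
print("static rule flags it:", static_rule(new_fraud))  # False
print(f"model fraud probability: {model.predict_proba(new_fraud):.2f}")
```

Nobody edited a rule between the two phases; the model simply kept learning from labeled outcomes, which is the adaptive behavior described above.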

How an AI Decision Engine Actually Works

Most AI decision engines operate in a few layers.

First, there's data ingestion. The engine pulls in whatever information is relevant — from internal systems, third-party APIs, real-time feeds. The quality of that input matters a lot. Garbage in, garbage out still applies.

Then the decision logic runs. This is usually a combination of hard rules (things like regulatory requirements or business policies that can't flex) and model-based scoring (where the AI assesses probability or risk based on patterns in training data). Some engines let these layers interact — a model score feeds into a rule, or a rule triggers a different model depending on context.

Finally, the output. A decision, a score, a recommendation — whatever the use case requires. Usually with some kind of explanation attached, especially in regulated industries where you need to justify why a decision was made.

The whole thing can happen in milliseconds. That's the operational point. Decisions that used to take days — because someone had to manually review a file — now happen instantly, consistently, at whatever volume you're running.
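The three layers can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any vendor's design: the field names, the `SUPPORTED_COUNTRIES` policy, the 0.30/0.60 thresholds, and the debt-to-income `model_score` standing in for a trained model are all hypothetical. The hard rules run first and can short-circuit the decision, and a rule then consumes the model's score.

```python
from dataclasses import dataclass
from typing import Optional

SUPPORTED_COUNTRIES = {"US", "CA"}  # hypothetical business policy


@dataclass
class Decision:
    outcome: str            # "approve", "decline", or "escalate"
    score: Optional[float]  # model score, if the model ran
    reasons: list[str]      # explanation attached to the output


def ingest(raw: dict) -> dict:
    """Data ingestion layer: normalize fields pulled from upstream systems."""
    return {
        "age": int(raw.get("age", 0)),
        "income": float(raw.get("income", 0.0)),
        "debt": float(raw.get("debt", 0.0)),
        "country": str(raw.get("country", "")).upper(),
    }


def model_score(app: dict) -> float:
    """Stand-in for a trained model: a hypothetical risk score in [0, 1]."""
    if app["income"] <= 0:
        return 1.0
    return min(1.0, app["debt"] / app["income"])


def decide(raw: dict) -> Decision:
    app = ingest(raw)

    # Hard rules: policy or regulatory checks that can't flex.
    if app["age"] < 18:
        return Decision("decline", None, ["applicant under 18 (hard rule)"])
    if app["country"] not in SUPPORTED_COUNTRIES:
        return Decision("decline", None,
                        [f"country {app['country']!r} not supported (hard rule)"])

    # Model-based scoring, with a rule layered on top of the score.
    score = model_score(app)
    if score < 0.30:
        return Decision("approve", score, [f"risk score {score:.2f} below 0.30"])
    if score > 0.60:
        return Decision("decline", score, [f"risk score {score:.2f} above 0.60"])
    return Decision("escalate", score, [f"risk score {score:.2f} in review band"])


print(decide({"age": 34, "income": 80000, "debt": 12000, "country": "us"}))
```

Every path returns a reason alongside the outcome, which is the cheapest way to meet the "explanation attached" requirement for regulated use cases.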

Where Businesses Are Actually Using This

Credit and lending is probably the most mature use case. AI decision engines assess loan applications, assign risk scores, and make or recommend approval decisions based on hundreds of variables — not just the three or four a human reviewer might check. The speed alone changes customer experience. Applications that used to take days get answered in minutes.

Fraud detection is where the adaptive quality of AI really earns its keep. Fraud patterns change constantly. Rule-based systems can't keep up. AI models retrain on new data, pick up emerging patterns, and flag suspicious transactions in real time without blocking legitimate ones. This is a hard problem. It's also one of the clearest success stories for AI decision engines.

Insurance underwriting is another big one. Pricing a policy involves a lot of variables. AI engines can process far more of them than manual underwriting workflows, price more accurately, and handle the volume that most insurers couldn't staff for manually.

Outside financial services, healthcare organizations use AI decision engines for patient routing and care pathway recommendations. E-commerce companies use them for dynamic pricing, return fraud detection, and personalized offers. HR technology platforms have started applying them to candidate screening, though this use comes with legitimate concerns about bias that aren't fully resolved yet.

Rule-Based vs. AI-Driven: When Each Makes Sense

This distinction matters more than most vendor comparisons acknowledge.

Pure rule-based decision engines are predictable. You can read the logic. You can audit exactly why a decision was made. In heavily regulated environments, that auditability is not optional; it's a compliance requirement. Rule-based systems are also easier to explain to regulators and customers.

AI-driven decision engines are more powerful in complex or changing environments. They find patterns humans wouldn't think to encode as rules. They handle edge cases more gracefully. They improve over time.

The realistic answer for most organizations is both. Hard rules for compliance-critical logic that must be transparent and consistent. AI scoring for the parts where nuance and pattern recognition add real value. Most mature AI decision engines are built to support this hybrid approach — which is worth checking for when evaluating platforms.

What Makes a Good AI Decision Engine

A few things that actually matter, beyond the marketing materials.

Explainability. Can the engine tell you why it made a decision? "The model said so" isn't good enough when a customer asks why they were declined, or when a regulator asks the same question. Look for platforms with built-in explanation features — SHAP values, decision traces, something concrete.
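For intuition about what SHAP-style attributions report, consider the simplest case. For a linear scoring model with independent features, the SHAP value of feature i reduces to w_i * (x_i - E[x_i]): the weight times how far the applicant sits from the average. The sketch below assumes exactly that setting; the weights, baseline means, and feature names are hypothetical, and non-linear models need a library such as `shap` to estimate attributions. The attributions sum to the gap between this applicant's score and the average score, which is what makes them usable as reason codes.

```python
# Hypothetical linear risk model (higher score means riskier).
WEIGHTS = {"debt_ratio": 2.0, "late_payments": 0.25, "years_employed": -0.5}
BIAS = -1.0
BASELINE = {"debt_ratio": 0.25, "late_payments": 1.0, "years_employed": 5.0}  # training-set means


def score(x: dict[str, float]) -> float:
    """Linear risk score."""
    return BIAS + sum(w * x[name] for name, w in WEIGHTS.items())


def linear_shap(x: dict[str, float]) -> dict[str, float]:
    """Exact SHAP values for a linear model with independent features."""
    return {name: w * (x[name] - BASELINE[name]) for name, w in WEIGHTS.items()}


def reason_codes(x: dict[str, float], top_n: int = 2) -> list[str]:
    """Features that pushed the score up the most, largest first."""
    attributions = linear_shap(x)
    ranked = sorted(attributions.items(), key=lambda kv: kv[1], reverse=True)
    return [name for name, value in ranked if value > 0][:top_n]


applicant = {"debt_ratio": 0.75, "late_payments": 3.0, "years_employed": 2.0}
print(linear_shap(applicant))   # {'debt_ratio': 1.0, 'late_payments': 0.5, 'years_employed': 1.5}
print(reason_codes(applicant))  # ['years_employed', 'debt_ratio']
```

The top reason here is short tenure, not the debt ratio, even though the debt ratio has the largest weight. That is the kind of answer "the model said so" can't give a declined customer.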

Model monitoring. AI models drift. The patterns they were trained on stop reflecting current reality. A good engine has monitoring built in: alerts when model performance degrades, and tools for retraining or updating models without taking the system offline.
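One common way to catch drift is to compare the distribution of recent model scores (or inputs) against the distribution seen at training time. The sketch below computes the Population Stability Index, PSI = sum over bins of (a_i - e_i) * ln(a_i / e_i), where e_i and a_i are the expected (training) and actual (recent) proportions in bin i. The bin edges and sample data are hypothetical, and the 0.1 / 0.25 cut-offs are a widely used industry rule of thumb rather than a standard; a given platform may monitor with different statistics entirely.

```python
import math
from bisect import bisect_right


def bin_proportions(values: list[float], edges: list[float], eps: float = 1e-6) -> list[float]:
    """Share of values in each bin; eps keeps empty bins from producing log(0)."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [max(c / len(values), eps) for c in counts]


def psi(expected: list[float], actual: list[float], edges: list[float]) -> float:
    """Population Stability Index between a reference sample and a recent sample."""
    e = bin_proportions(expected, edges)
    a = bin_proportions(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


def drift_status(value: float) -> str:
    # Rule-of-thumb cut-offs; tune them for your own models.
    if value < 0.1:
        return "stable"
    if value < 0.25:
        return "moderate shift: investigate"
    return "significant shift: consider retraining"


edges = [0.25, 0.5, 0.75]
training_scores = [i / 100 for i in range(100)]      # spread evenly over [0, 1)
recent_scores = [0.5 + i / 200 for i in range(100)]  # everything has moved up
value = psi(training_scores, recent_scores, edges)
print(f"PSI = {value:.2f} -> {drift_status(value)}")
```

PSI only says the inputs or scores have moved; it doesn't say accuracy has dropped. Pairing it with outcome-based metrics, once labels arrive, gives the fuller picture.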

Latency under load. Demo environments always look fast. Ask about performance at your actual expected volume. Real-time fraud detection at 10,000 transactions per second is a very different engineering problem than batch processing overnight.
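Two pieces of arithmetic help when pressing vendors on this. First, ask for tail latency (p99), not the average, because averages hide the slow tail that real-time use cases are sensitive to. Second, Little's law (requests in flight = throughput x latency) tells you how much concurrency the platform must sustain: 10,000 transactions per second at 20 ms each means about 200 decisions in progress at any moment. The sketch below assumes a nearest-rank percentile and a hypothetical `fake_decide` function standing in for a call to the engine.

```python
import math
import time
from typing import Callable


def measure_latencies_ms(fn: Callable[[], object], n: int) -> list[float]:
    """Call fn n times and record each call's wall-clock latency in milliseconds."""
    samples = []
    for _ in range(n):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def percentile(samples: list[float], p: int) -> float:
    """Nearest-rank percentile: smallest sample with at least p percent of samples <= it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def required_concurrency(tps: float, latency_ms: float) -> int:
    """Little's law: in-flight requests = arrival rate x time in system."""
    return math.ceil(tps * latency_ms / 1000.0)


def fake_decide() -> str:
    # Hypothetical stand-in for a call to the decision engine.
    return "approve"


latencies = measure_latencies_ms(fake_decide, 1000)
print(f"p50={percentile(latencies, 50):.4f} ms  p99={percentile(latencies, 99):.4f} ms")
print(required_concurrency(10_000, 20))  # 200
```

A single-threaded loop like this measures per-call latency only; testing at your real volume still means running concurrent load against the vendor's environment.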

Integration depth. The engine is only as good as the data it can access. How easily does it connect to your existing data sources? What does the API look like? This part often gets underestimated during evaluation.

Human override and escalation. Not everything should be fully automated. Good systems make it easy to route edge cases to human review, and they capture the outcomes of those human decisions to feed back into model improvement.
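A minimal sketch of that pattern, assuming a single risk score with a hypothetical review band between 0.3 and 0.7: confident cases are automated, uncertain ones go to a queue, and each reviewer's call is stored as a labeled example that a later retraining job could use. The class and field names are illustrative, not any platform's API.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewQueue:
    low: float = 0.3   # below this, auto-approve (hypothetical threshold)
    high: float = 0.7  # above this, auto-decline (hypothetical threshold)
    pending: dict[str, tuple[dict, float]] = field(default_factory=dict)
    labeled: list[dict] = field(default_factory=list)

    def route(self, case_id: str, features: dict, score: float) -> str:
        """Automate confident cases; hold uncertain ones for a human."""
        if score < self.low:
            return "auto_approve"
        if score > self.high:
            return "auto_decline"
        self.pending[case_id] = (features, score)
        return "human_review"

    def record_outcome(self, case_id: str, reviewer_decision: str) -> None:
        """Store the reviewer's decision as a training label for the next model."""
        features, score = self.pending.pop(case_id)
        self.labeled.append(
            {"features": features, "model_score": score, "label": reviewer_decision}
        )


queue = ReviewQueue()
print(queue.route("c-1", {"amount": 40}, 0.12))   # auto_approve
print(queue.route("c-2", {"amount": 900}, 0.55))  # human_review
queue.record_outcome("c-2", "decline")
print(len(queue.labeled), queue.labeled[0]["label"])  # 1 decline
```

Widening or narrowing the band is how you trade reviewer workload against automation risk; the labeled cases are usually the hardest ones, which makes them especially valuable for retraining.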

The Bias Problem — Worth Being Direct About

AI decision engines inherit bias from training data. If historical decisions were discriminatory — in lending, hiring, criminal justice — a model trained on that history will reproduce those patterns. Sometimes amplify them.

This isn't a theoretical concern. There have been real cases in credit, hiring, and healthcare where AI decision systems produced outcomes that were discriminatory in ways that weren't immediately obvious from the model's inputs.

The industry has responded with fairness tooling — bias audits, demographic parity checks, counterfactual testing. These help. They don't fully solve the problem. Organizations deploying AI decision engines in high-stakes domains should treat bias monitoring as ongoing operational work, not a one-time checkbox.

This part often gets glossed over in product demos.
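As an illustration of the simplest of these checks, the sketch below compares approval rates across groups. It reports the demographic parity difference (the gap between the highest and lowest approval rate) and the ratio of lowest to highest rate; flagging a ratio under 0.8 follows the "four-fifths" screening heuristic from US employment guidance. The groups and counts are invented for the example. Passing this check does not mean a model is fair: it is one signal among several and says nothing about error rates within each group.

```python
from collections import defaultdict


def approval_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Approval rate per group from (group, approved) pairs."""
    totals: dict[str, int] = defaultdict(int)
    approvals: dict[str, int] = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        approvals[group] += int(approved)
    return {g: approvals[g] / totals[g] for g in totals}


def parity_report(decisions: list[tuple[str, bool]], min_ratio: float = 0.8) -> dict:
    """Parity difference, impact ratio, and whether the ratio falls below min_ratio."""
    rates = approval_rates(decisions)
    highest, lowest = max(rates.values()), min(rates.values())
    ratio = lowest / highest if highest > 0 else 1.0
    return {
        "rates": rates,
        "parity_difference": highest - lowest,
        "impact_ratio": ratio,
        "flagged": ratio < min_ratio,
    }


# Hypothetical month of decisions: group A approved 8/10, group B approved 5/10.
sample = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 5 + [("B", False)] * 5
report = parity_report(sample)
print(report["rates"], round(report["impact_ratio"], 3), report["flagged"])
```

Running a check like this on every scoring batch, rather than once before launch, is what turns it into the ongoing operational work described above.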

Implementation: What Actually Takes Time

Getting an AI decision engine running takes longer than most vendors imply. The technology setup is usually the faster part.

The slower part is decision design. What exactly should the engine decide? What are the acceptable error rates in each direction — false positives versus false negatives? Who owns the model? Who approves rule changes? What's the escalation path when the engine isn't sure?

These are organizational questions, not technical ones. They require input from operations, compliance, legal, and often the business units whose workflows are changing. Skipping those conversations upfront leads to systems that technically work but practically don't — because the logic doesn't match how the business actually wants to operate.
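Once those conversations happen, the "acceptable error rates in each direction" question eventually becomes a threshold choice. A minimal sketch, assuming a fraud model where a score at or above the threshold means "flag": given a labeled validation set and agreed costs for a false positive (a good customer blocked) and a false negative (fraud let through), pick the candidate threshold with the lowest total cost. The scores, labels, and costs are hypothetical.

```python
def error_counts(scores: list[float], labels: list[int], threshold: float) -> tuple[int, int]:
    """(false positives, false negatives) when flagging scores >= threshold."""
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    return fp, fn


def best_threshold(scores: list[float], labels: list[int], candidates: list[float],
                   fp_cost: float, fn_cost: float) -> float:
    """Candidate threshold with the lowest total misclassification cost."""
    def total_cost(t: float) -> float:
        fp, fn = error_counts(scores, labels, t)
        return fp * fp_cost + fn * fn_cost
    return min(candidates, key=total_cost)


scores = [0.10, 0.20, 0.35, 0.40, 0.55, 0.60, 0.80, 0.90]
labels = [0, 0, 1, 0, 0, 1, 1, 1]  # 1 = confirmed fraud
candidates = [0.3, 0.5, 0.7]

# Missed fraud is 10x worse than a blocked customer: flag aggressively.
print(best_threshold(scores, labels, candidates, fp_cost=1, fn_cost=10))  # 0.3
# Blocking a customer is 10x worse than missed fraud: flag conservatively.
print(best_threshold(scores, labels, candidates, fp_cost=10, fn_cost=1))  # 0.7
```

The same model ends up with a different operating point purely because the business weighs the two errors differently, which is why that weighting has to come from operations, compliance, and legal rather than from the data science team alone.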

Data readiness also takes time. Most organizations discover during AI implementation that their historical data is messier than expected — incomplete records, inconsistent labeling, gaps in coverage for certain customer segments. Cleaning and preparing training data is rarely glamorous work, but it's where a lot of the outcome quality is determined.

A Few Questions Worth Asking Vendors

Before you commit to any platform, some things that are worth pinning down:

What does the model training process look like — do you own your models, or is the vendor's black box doing something proprietary you can't audit? How does the platform handle regulatory changes that require logic updates fast? What's the process for rolling back a model that starts behaving unexpectedly? And — this one's important — who's responsible when the engine makes a wrong decision that causes real harm?

That last question tends to reveal a lot about how a vendor thinks about accountability.
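On the rollback question, it helps to know what a good answer looks like mechanically. A minimal sketch, assuming a simple in-memory registry (the class and method names are hypothetical, not any vendor's API): every model version stays registered, promotions are recorded in order, and rolling back re-activates the previously promoted version instead of retraining anything.

```python
from typing import Any, Optional


class ModelRegistry:
    """Keeps every registered version and the order in which they went live."""

    def __init__(self) -> None:
        self._versions: dict[str, Any] = {}
        self._history: list[str] = []  # promoted versions, most recent last

    def register(self, version: str, model: Any) -> None:
        self._versions[version] = model

    def promote(self, version: str) -> None:
        if version not in self._versions:
            raise KeyError(f"unknown model version {version!r}")
        self._history.append(version)

    @property
    def active(self) -> Optional[str]:
        return self._history[-1] if self._history else None

    def rollback(self) -> str:
        """Re-activate the previously promoted version."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self._history[-1]


registry = ModelRegistry()
registry.register("v1", object())
registry.register("v2", object())
registry.promote("v1")
registry.promote("v2")
print(registry.active)      # v2
print(registry.rollback())  # v1
```

If a vendor's answer to "how do we roll back?" involves retraining or a support ticket rather than something this simple, that is worth knowing before you sign.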

AI decision engines are genuinely useful. For the right use cases — high-volume, pattern-rich, data-heavy decisions — they're hard to replicate with human review at scale. But they're not neutral tools. They encode assumptions, inherit data quality problems, and require ongoing oversight to stay reliable.

The organizations that get the most out of them are the ones that treat them as operational systems requiring continuous attention — not infrastructure you deploy and walk away from.

Frequently Asked Questions

What is an AI decision engine in simple terms?

It's software that makes decisions automatically using data and machine learning — instead of a human reviewing each case. You give it the relevant information, it evaluates that against trained models and business rules, and it produces an outcome: approve, decline, flag, route, price, recommend. The AI part means it can handle complexity and adapt over time, rather than just following fixed if-then rules. Think of it as automating the judgment part of a process, not just the task sequence.

How is an AI decision engine different from a regular rule-based engine?

A rule-based engine follows logic that someone explicitly wrote: if X and Y, then Z. It's predictable and easy to audit, but it can't handle what it wasn't programmed for. An AI decision engine uses models trained on data to recognize patterns and make probabilistic assessments — it can catch fraud patterns that no one thought to write a rule for, or price risk more accurately than a spreadsheet formula could. In practice, most modern platforms combine both: rules for compliance-critical logic that has to be transparent, AI models for the parts where nuance adds value.

What industries use AI decision engines the most?

Financial services — lending, fraud detection, insurance underwriting — are the most established users. But healthcare, e-commerce, HR technology, and logistics have all adopted them for patient routing, dynamic pricing, candidate screening, and supply chain decisions respectively. The common thread is high decision volume with patterns in the data. If you're making thousands of similar judgment calls a week and those decisions follow detectable patterns, an AI decision engine is probably applicable.

Can an AI decision engine explain why it made a decision?

It depends on how it's built. This property is called explainability, and it varies considerably across platforms. Some engines produce decision traces showing exactly which rules fired and what the model scored. Others use techniques like SHAP values to attribute the decision to specific input features. Some are essentially black boxes. For regulated industries like lending or insurance, explainability isn't optional: in many jurisdictions, regulators and applicants are entitled to know why a decision was made, particularly when an application is declined. If you're in one of those industries, treat explainability as a hard requirement, not a nice-to-have, when evaluating platforms.

What are the biggest risks of using an AI decision engine?

Three stand out. First, bias — models trained on historical data can reproduce and amplify past discrimination, especially in credit, hiring, or healthcare decisions. This requires active monitoring, not just a one-time audit. Second, model drift — model performance degrades over time as real-world patterns change, and without monitoring, you may not notice until outcomes have already gone wrong. Third, over-reliance — automated systems create pressure to remove human review entirely, which can be fine for routine cases but dangerous for edge cases or high-stakes decisions where context matters in ways the model can't fully capture. The organizations that manage these risks best treat the engine as something requiring ongoing oversight, not a set-it-and-forget-it deployment.
