An inference engine is the reasoning core of an expert system. It applies rules to a knowledge base and turns static facts into decisions or new conclusions. That is the short version, and it’s usually the one people actually need.

Nected uses this idea in a practical way. The platform takes rules, runs them against incoming data, and produces outcomes that can be used in workflows, approvals, routing, and other business logic. Simple enough on paper. In real systems, though, this is where things usually break, because scale and explainability matter just as much as the rules themselves.

What is an Inference Engine?

An inference engine is a software component that applies logical rules to a knowledge base to derive new conclusions or decisions. It sits at the reasoning layer of an expert system or rule-based AI. Static rules go in. Decisions come out.

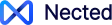

Core Components of Inference Engines

The engine usually depends on three parts: a knowledge base, the inference mechanism, and working memory. The knowledge base holds the rules and facts. The inference mechanism matches those rules against the data. Working memory keeps the temporary facts around while the engine is making its calls.

The usual flow is straightforward. Facts come in, rules get matched, one rule wins, the action runs, then the system checks whether anything else should fire. People often describe this as a Match-Resolve-Act cycle, which is the standard framing in a lot of expert system work. Same idea, different labels.

A simple IF-THEN example makes it easier to see:

- IF credit_score > 700 AND dti < 0.35 THEN approve loan

- Input facts: credit_score = 720, dti = 0.28

- Output: approve loan
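The same rule can be written as a plain predicate over a dictionary of facts. This is a minimal Python sketch. The function name `evaluate_loan_rule` is hypothetical and not part of any Nected API.

```python
from typing import Optional


def evaluate_loan_rule(facts: dict) -> Optional[str]:
    """Return the rule's action if its conditions hold, otherwise None."""
    # IF credit_score > 700 AND dti < 0.35 THEN approve loan
    if facts["credit_score"] > 700 and facts["dti"] < 0.35:
        return "approve loan"
    return None  # the rule does not fire for these facts


print(evaluate_loan_rule({"credit_score": 720, "dti": 0.28}))  # approve loan
```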

That’s the basic loop. In bigger systems, the engine repeats it over and over as new facts arrive. The Rete algorithm is what keeps that from becoming slow and messy, especially when there are thousands of rules involved.

Benefits of Inference Engines

The main advantage is consistency. The same inputs produce the same result, which matters a lot in finance, support, and compliance workflows. It also removes a lot of manual checking.

Other benefits are pretty obvious once you’ve used one in production: faster decisions, easier scaling, and less room for people to apply rules differently. That last part often gets ignored until audit time.

How an Inference Engine Works: The 5-Step Cycle

Most inference engines follow a simple loop: input, match, select, execute, repeat. The academic version usually calls this Match-Resolve-Act. Nected follows that general pattern, but the important bit is what happens under the hood when the rule set gets large.

Here’s a small example.

- Step 1 — Input: a customer submits a loan application.

- Step 2 — Match: the engine checks credit score, income, and debt ratio against available rules.

- Step 3 — Select: if multiple rules match, the engine resolves which one should fire first.

- Step 4 — Execute: the chosen rule marks the application as approved or sent for review.

- Step 5 — Repeat: the engine checks whether any follow-up rules should run.
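The five steps map onto a small forward-chaining loop. Below is a minimal Python sketch of that Match-Resolve-Act cycle. It makes two assumptions: conflicts are resolved by salience (a numeric priority), and each rule fires at most once per run. The `Rule` class, the rule names, and the follow-up `notify` rule are hypothetical. This is not a description of how Nected resolves conflicts internally.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Rule:
    name: str
    salience: int                      # priority used to resolve conflicts (higher wins)
    condition: Callable[[Dict], bool]  # tested against working memory in the Match step
    action: Callable[[Dict], None]     # updates working memory in the Execute step


def run(rules: List[Rule], facts: Dict, max_cycles: int = 100) -> Tuple[List[str], Dict]:
    memory = dict(facts)  # Step 1 (Input): copy incoming facts into working memory
    fired: List[str] = []
    for _ in range(max_cycles):
        # Step 2 (Match): rules whose condition holds and that have not fired yet
        # (letting each rule fire only once is a simple form of refraction)
        agenda = [r for r in rules if r.name not in fired and r.condition(memory)]
        if not agenda:
            break  # no more rules can fire, so the cycle stops
        # Step 3 (Select): pick the matching rule with the highest salience
        chosen = max(agenda, key=lambda r: r.salience)
        # Step 4 (Execute): run its action, which may add or change facts
        chosen.action(memory)
        fired.append(chosen.name)
        # Step 5 (Repeat): the loop re-matches against the updated memory
    return fired, memory


RULES = [
    Rule("approve", 10,
         lambda m: "decision" not in m and m["credit_score"] > 700 and m["dti"] < 0.35,
         lambda m: m.update(decision="approved")),
    Rule("review", 5,
         lambda m: "decision" not in m,
         lambda m: m.update(decision="review")),
    Rule("notify", 1,
         lambda m: "decision" in m,
         lambda m: m.update(notified=True)),
]

fired, memory = run(RULES, {"credit_score": 720, "income": 80000, "dti": 0.28})
print(fired)               # ['approve', 'notify']
print(memory["decision"])  # approved
```

In the first cycle, both `approve` and `review` match, and salience picks `approve`. Its conclusion then enables `notify` on the next pass. That second pass is the "repeat" step in action.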

That cycle is the reason inference engines work well for business logic. They don’t just store rules. They actually do something with them, over and over, as the data changes.

Types of Inference Engines: Forward vs Backward Chaining

The two main styles are forward chaining and backward chaining. Forward chaining starts with facts and moves toward a conclusion. Backward chaining starts with a goal and works back to see what conditions need to be true.

If you’re deciding between them, use this:

- Use forward chaining when new data should trigger an immediate action.

- Use backward chaining when you already know the outcome you want.

- Use hybrid chaining, which combines the two, when the workflow needs both reaction and validation.
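Both strategies can be sketched over the same tiny rule base. In this minimal Python example, the proposition names and helper functions are hypothetical, not Nected's API. Forward chaining derives everything it can from the facts. Backward chaining only explores the rules needed to prove one goal.

```python
from typing import List, Optional, Set, Tuple

# Hypothetical rule base: (conclusion, premises). Facts are symbolic flags that a
# caller would compute from raw inputs such as credit_score and dti.
RULES: List[Tuple[str, List[str]]] = [
    ("approve_loan", ["good_credit", "low_dti"]),
    ("good_credit", ["credit_score_over_700"]),
    ("low_dti", ["dti_under_0_35"]),
]


def forward_chain(facts: Set[str], rules: List[Tuple[str, List[str]]]) -> Set[str]:
    """Start from facts and keep adding conclusions until nothing new is derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conclusion, premises in rules:
            if conclusion not in known and all(p in known for p in premises):
                known.add(conclusion)
                changed = True
    return known


def backward_chain(goal: str, facts: Set[str], rules: List[Tuple[str, List[str]]],
                   visiting: Optional[Set[str]] = None) -> bool:
    """Start from a goal and work back through the rules that could conclude it."""
    visiting = set() if visiting is None else visiting
    if goal in facts:
        return True
    if goal in visiting:  # guard against cyclic rules
        return False
    visiting = visiting | {goal}
    return any(
        all(backward_chain(p, facts, rules, visiting) for p in premises)
        for conclusion, premises in rules
        if conclusion == goal
    )


facts = {"credit_score_over_700", "dti_under_0_35"}
print("approve_loan" in forward_chain(facts, RULES))  # True
print(backward_chain("approve_loan", facts, RULES))   # True
```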

Nected supports both approaches, which gives it more flexibility than systems that only do one or the other.

For a deeper comparison, see our in-depth guide to forward vs backward chaining.

The Rete Algorithm: How Modern Inference Engines Stay Fast

The Rete algorithm is the trick that keeps large rule sets usable. Instead of rechecking every rule from scratch whenever something changes, it caches partial matches in a network. That saves a lot of repeated work. Nected’s rule engine uses this approach internally.

Why does that matter? Because the performance difference gets obvious once you move beyond a small demo. A system with a few dozen rules is easy. A system with thousands of them is a different story. Nected can still evaluate decisions quickly because the engine is not doing full reprocessing every time a fact changes.

The short version: Rete makes rule evaluation scale better. It is one of the reasons Nected can handle large volumes of decisions without slowing to a crawl.
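A full Rete network, with its alpha and beta networks, tokens, and retraction, is too large for a short example. The core idea of caching partial matches can still be shown in miniature. This Python sketch is a heavily simplified illustration under stated assumptions, not Nected's implementation. Each condition keeps an alpha memory of facts that already passed it. A newly asserted fact is tested once and joined only against stored facts that share the join key, instead of every rule being re-evaluated against every fact. The class name, the `customer_id` join key, and the fact shapes are hypothetical.

```python
from collections import defaultdict


class TwoConditionRule:
    """Heavily simplified Rete-style node: two alpha memories joined on a shared key.

    Simplification: real Rete stores partial matches (tokens) in beta memories;
    here the two alpha memories are joined directly.
    """

    def __init__(self, left_test, right_test, join_key, on_match):
        self.left_test = left_test    # alpha test for left-side facts
        self.right_test = right_test  # alpha test for right-side facts
        self.join_key = join_key      # attribute both facts must share
        self.on_match = on_match      # called once per complete match
        # Alpha memories: facts that already passed each test, grouped by join key
        self.left_mem = defaultdict(list)
        self.right_mem = defaultdict(list)

    def assert_fact(self, fact):
        key = fact.get(self.join_key)
        # Only the new fact is tested; stored facts are never re-tested.
        if self.left_test(fact):
            self.left_mem[key].append(fact)
            for other in self.right_mem[key]:
                self.on_match(fact, other)
        if self.right_test(fact):
            self.right_mem[key].append(fact)
            for other in self.left_mem[key]:
                self.on_match(other, fact)


def is_enterprise_customer(f):
    return f["type"] == "customer" and f["tier"] == "enterprise"


def is_high_priority_ticket(f):
    return f["type"] == "ticket" and f["priority"] == "high"


matches = []
rule = TwoConditionRule(
    is_enterprise_customer, is_high_priority_ticket, "customer_id",
    lambda c, t: matches.append((c["customer_id"], t["ticket_id"])),
)
rule.assert_fact({"type": "customer", "customer_id": 1, "tier": "enterprise"})
rule.assert_fact({"type": "customer", "customer_id": 2, "tier": "standard"})
rule.assert_fact({"type": "ticket", "ticket_id": "T-100", "customer_id": 1, "priority": "high"})
rule.assert_fact({"type": "ticket", "ticket_id": "T-101", "customer_id": 2, "priority": "high"})
print(matches)  # [(1, 'T-100')]
```

When ticket T-100 arrives, only that ticket is tested. It is then joined against the one enterprise customer already cached under `customer_id` 1, rather than rescanning all customers and tickets.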

Inference Engine Examples: 5 Real-World Scenarios

People usually understand inference engines best when they see actual input, rules, and output. So here are five.

1. Loan eligibility

Facts: credit_score = 720, income = 80000, DTI = 0.28. Rule: IF credit_score > 700 AND DTI < 0.35 THEN approve. Output: Approved.

2. Fraud detection

Facts: amount = £2,400, location = new_country, time = 2AM. Rule: IF amount is unusually high AND location is new THEN flag for review. Output: Flagged for review.

3. Medical diagnosis

Facts: fever = true, cough = true, oxygen = 94%. Rule: IF fever AND cough AND oxygen < 95% THEN suspect pneumonia and refer for chest X-ray. Output: Referred for chest X-ray.

4. E-commerce personalisation

Facts: customer_segment = premium, cart_value = £180. Rule: IF segment = premium AND cart > £150 THEN apply_discount = 10%. Output: Discount applied.

5. Customer routing in Nected

Facts: ticket_priority = high, customer_tier = enterprise, wait_time = 8min. Rule: IF priority = high AND tier = enterprise THEN route to senior agent. Output: Routed immediately.
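All five scenarios share the same shape, so they can live in one rule table and be evaluated against whatever facts arrive. This is a minimal Python sketch. The rule names and the `evaluate` helper are hypothetical. The fraud threshold of 2,000 is an assumed value standing in for "unusually high", which the scenario leaves unspecified.

```python
# Each entry: (rule name, condition over the facts, output). Thresholds follow the
# five scenarios above, except FRAUD_AMOUNT_THRESHOLD, which is an assumed value.
FRAUD_AMOUNT_THRESHOLD = 2000  # GBP, hypothetical

RULES = [
    ("loan_eligibility",
     lambda f: f.get("credit_score", 0) > 700 and f.get("dti", 1.0) < 0.35,
     "Approved"),
    ("fraud_detection",
     lambda f: f.get("amount", 0) > FRAUD_AMOUNT_THRESHOLD
     and f.get("location") == "new_country",
     "Flagged for review"),
    ("medical_triage",
     lambda f: f.get("fever", False) and f.get("cough", False) and f.get("oxygen", 100) < 95,
     "Refer for chest X-ray"),
    ("premium_discount",
     lambda f: f.get("customer_segment") == "premium" and f.get("cart_value", 0) > 150,
     "Discount applied"),
    ("ticket_routing",
     lambda f: f.get("ticket_priority") == "high" and f.get("customer_tier") == "enterprise",
     "Routed immediately"),
]


def evaluate(facts: dict) -> list:
    """Return the output of every rule whose condition holds for these facts."""
    return [output for _name, condition, output in RULES if condition(facts)]


print(evaluate({"credit_score": 720, "income": 80000, "dti": 0.28}))      # ['Approved']
print(evaluate({"amount": 2400, "location": "new_country", "hour": 2}))   # ['Flagged for review']
```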

Inference Engine in AI: From Expert Systems to LLMs (2026)

The phrase “inference engine in AI” gets used in two different ways now. In the classic sense, it means the reasoning layer inside an expert system. In the modern sense, it can also mean the runtime that executes a trained model, like an LLM or a neural network.

Those are not the same thing. Classic inference engines reason with symbolic rules. Modern AI inference engines run learned models to produce predictions. Nected sits firmly in the first camp. That gives it explainability and auditability, which matter a lot in business workflows.

If you’re comparing the two, the distinction is simple: rule-based inference is easier to inspect, while model inference is better for pattern-heavy prediction. Different tools, different jobs.

Inference Engine vs Rule Engine: Key Differences

These two terms get mixed up all the time. A rule engine manages the rules. An inference engine is the part that reasons over them and decides what should happen next.

You need inference when the system has to reason, not just execute a static rule. That matters in approvals, fraud checks, and anything where the next decision depends on more than one condition. Nected handles both sides here. It gives you rule authoring through decision tables and IF-ELSE logic, and it also supports inference through chaining and API-driven execution.
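A decision table is a compact way to author rules: each row is one IF-THEN case. Below is a minimal Python sketch that assumes a "first hit" policy, where rows are checked top to bottom and the first match wins. First hit is one common convention, for example in DMN; Nected's own table semantics may differ. Only the first row mirrors the loan rule in this article. The other thresholds and the `decide` helper are hypothetical.

```python
# Each row: (credit_score must exceed, dti must be below, outcome).
# Row 1 mirrors the article's loan rule; rows 2 and 3 are hypothetical.
LOAN_TABLE = [
    (700, 0.35, "approve"),
    (620, 0.43, "review"),
    (float("-inf"), float("inf"), "reject"),  # catch-all row
]


def decide(credit_score: float, dti: float) -> str:
    """First-hit decision table: return the outcome of the first matching row."""
    for min_score, max_dti, outcome in LOAN_TABLE:
        if credit_score > min_score and dti < max_dti:
            return outcome
    raise ValueError("no row matched")


print(decide(720, 0.28))  # approve
print(decide(650, 0.40))  # review
```

A table like this executes one static lookup. The inference side shows up when a row's outcome becomes a new fact that other rules react to.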

How Nected’s Inference Engine Works: Architecture & Capabilities

Nected’s setup is pretty direct: the knowledge base lives in the UI as decision tables and rule sets, the inference engine evaluates those rules using Rete-based forward chaining through API calls, and working memory holds the runtime facts for each request.

That gives you a few useful things. Business teams can author rules without touching code. Developers can call the engine from any app over REST. Versioning lets teams test changes without breaking production. And every decision can be logged, which is the part compliance teams always ask for later anyway.

A quick example:

curl -X POST https://api.nected.ai/v1/inference/evaluate \
  -H "Authorization: Bearer YOUR_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "facts": {
      "credit_score": 720,
      "income": 80000,
      "dti": 0.28
    },
    "rule_set_id": "loan-approval"
  }'
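The same call can be made from Python using only the standard library. This sketch builds the POST request without sending it. The helper name `build_evaluate_request` is hypothetical. The endpoint, headers, and `facts`/`rule_set_id` payload are copied from the curl example above and have not been checked against Nected's API reference.

```python
import json
import urllib.request

# Endpoint copied from the curl example; not verified against current API docs.
ENDPOINT = "https://api.nected.ai/v1/inference/evaluate"


def build_evaluate_request(api_key: str, facts: dict, rule_set_id: str) -> urllib.request.Request:
    """Build (but do not send) a POST request carrying the facts and rule set ID."""
    body = json.dumps({"facts": facts, "rule_set_id": rule_set_id}).encode("utf-8")
    return urllib.request.Request(
        ENDPOINT,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_evaluate_request(
    "YOUR_API_KEY", {"credit_score": 720, "income": 80000, "dti": 0.28}, "loan-approval"
)
# Sending it requires network access and a valid key:
# with urllib.request.urlopen(req) as resp:
#     print(json.loads(resp.read()))
```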

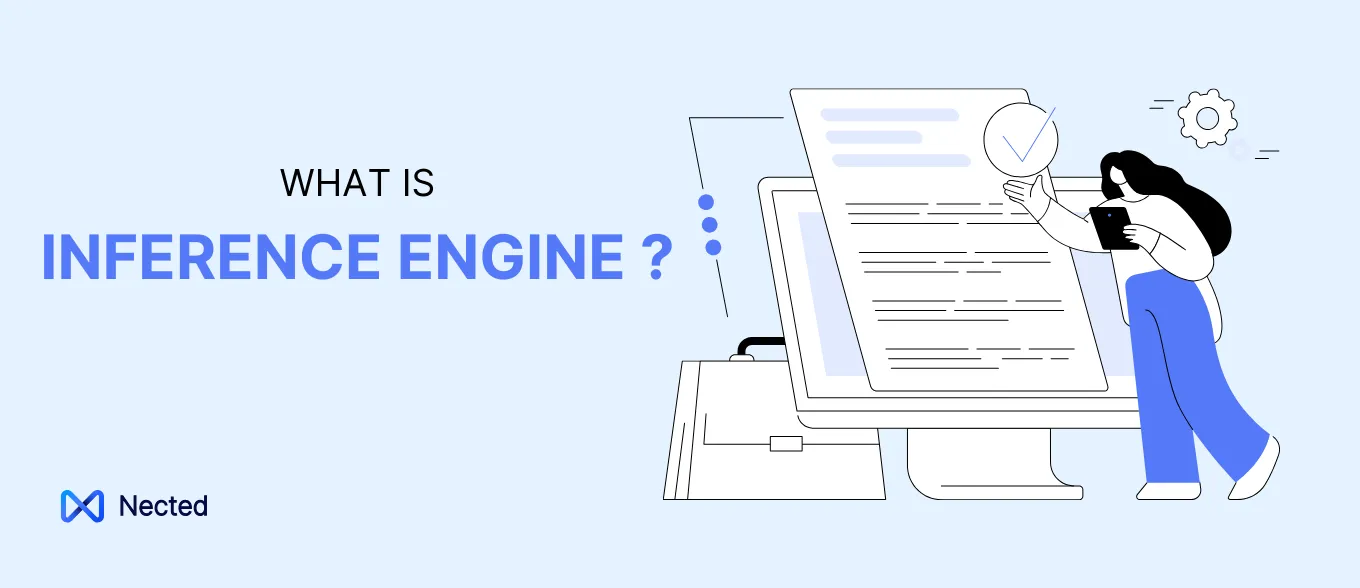

Unlike Drools, which is powerful but has a steeper Java-heavy learning curve, Nected is built to work for both developers and business users. The dashboard helps non-engineers edit logic. The API keeps it usable for product and engineering teams. You can see more in our Nected vs Drools inference engine comparison.

Inference Engine Applications by Industry

Financial services

Loan underwriting is one of the clearest uses for an inference engine. Rules can check credit score, income, and debt ratios in real time, then approve, reject, or send the case for review. Our loan eligibility inference engine example shows how that works in practice.

Insurance

Claims handling often depends on rule-based decisions. An inference engine can check claim amount, policy type, and prior history before routing the case. That keeps the process moving and avoids a lot of manual back-and-forth.
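Claims routing can be sketched as a short, ordered set of checks over claim amount, policy type, and prior history. This is a minimal Python illustration. The thresholds, policy types, and queue names are hypothetical placeholders, not values from Nected or any insurer.

```python
def route_claim(claim_amount: float, policy_type: str, prior_claims: int) -> str:
    """Route a claim using ordered rules; all thresholds and queues are hypothetical."""
    # Rule 1: a long claim history goes to manual review first
    if prior_claims >= 3:
        return "manual_review"
    # Rule 2: large or commercial claims need a senior adjuster
    if claim_amount > 10_000 or policy_type == "commercial":
        return "senior_adjuster"
    # Default: everything else can be fast-tracked
    return "fast_track"


print(route_claim(500, "home", 0))     # fast_track
print(route_claim(15_000, "home", 0))  # senior_adjuster
```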

Healthcare

Clinical decision support is another strong fit. The engine can evaluate symptoms, thresholds, and patient context, then surface the next step for a clinician. It does not replace a doctor. It just removes some of the obvious noise.

E-commerce

Personalisation and discount logic are a natural match. Nected can trigger offers based on customer segment, cart value, or browsing behaviour. The logic stays editable, which matters when promo rules keep changing.

Fraud detection

Fraud scoring usually needs quick checks across multiple signals. An inference engine can combine amount, location, timing, and account history to flag suspicious activity. For a closer look, see our guide to real-time inference for fraud detection.
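One common way to combine several signals is a weighted score with a review threshold. Recording which signals fired keeps the decision explainable. This is a minimal Python sketch. The signal names, weights, and the cut-off of 60 are hypothetical and would need tuning on real data.

```python
# (signal name, test on the transaction, weight). All values are hypothetical.
SIGNALS = [
    ("high_amount", lambda t: t["amount"] > 2000, 40),
    ("new_location", lambda t: t["location_is_new"], 30),
    ("night_time", lambda t: 0 <= t["hour"] < 5, 15),
    ("new_account", lambda t: t["account_age_days"] < 30, 25),
]
REVIEW_THRESHOLD = 60  # hypothetical cut-off


def score_transaction(txn: dict):
    """Sum the weights of the signals that fire and decide whether to flag."""
    score, reasons = 0, []
    for name, test, weight in SIGNALS:
        if test(txn):
            score += weight
            reasons.append(name)  # keep the reasons so the decision is explainable
    decision = "flag_for_review" if score >= REVIEW_THRESHOLD else "allow"
    return score, decision, reasons


print(score_transaction({"amount": 2400, "location_is_new": True, "hour": 2, "account_age_days": 400}))
# (85, 'flag_for_review', ['high_amount', 'new_location', 'night_time'])
```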

Frequently Asked Questions

1. What is an inference engine?

An inference engine is the part of a rule-based system that applies logical rules to facts and produces a decision or conclusion.

2. How does an inference engine function within a rule-based system?

It matches incoming facts against known rules, selects the ones that apply, and runs the right action.

3. What are the types of inference engines?

The main types are forward chaining, backward chaining, and hybrid chaining.

4. How does Nected utilize inference engines?

Nected uses inference to evaluate rules, automate decisions, and support workflow execution through API-based rule processing.

5. What benefits does Nected's inference engine offer?

It gives teams explainable decisions, faster rule evaluation, better scale, and easier rule updates.

6. How does forward chaining differ from backward chaining in Nected?

Forward chaining starts with facts and moves toward an outcome. Backward chaining starts with the outcome and works backward to confirm the needed conditions.

7. Can Nected's inference engine handle large-scale systems?

Yes. It is built for large rule sets and high-volume decision flows.

8. How does Nected ensure the accuracy of its inference engine?

Through rule versioning, real-time evaluation, and explainable decision logs.

9. What industries can benefit from Nected's inference engine?

Finance, insurance, healthcare, e-commerce, and fraud detection are the obvious ones.

10. How does Nected's inference engine integrate with existing workflows?

It integrates through APIs and can sit inside existing approval, routing, and decision workflows without much friction.
